Lakulish Antani, Dinesh Manocha

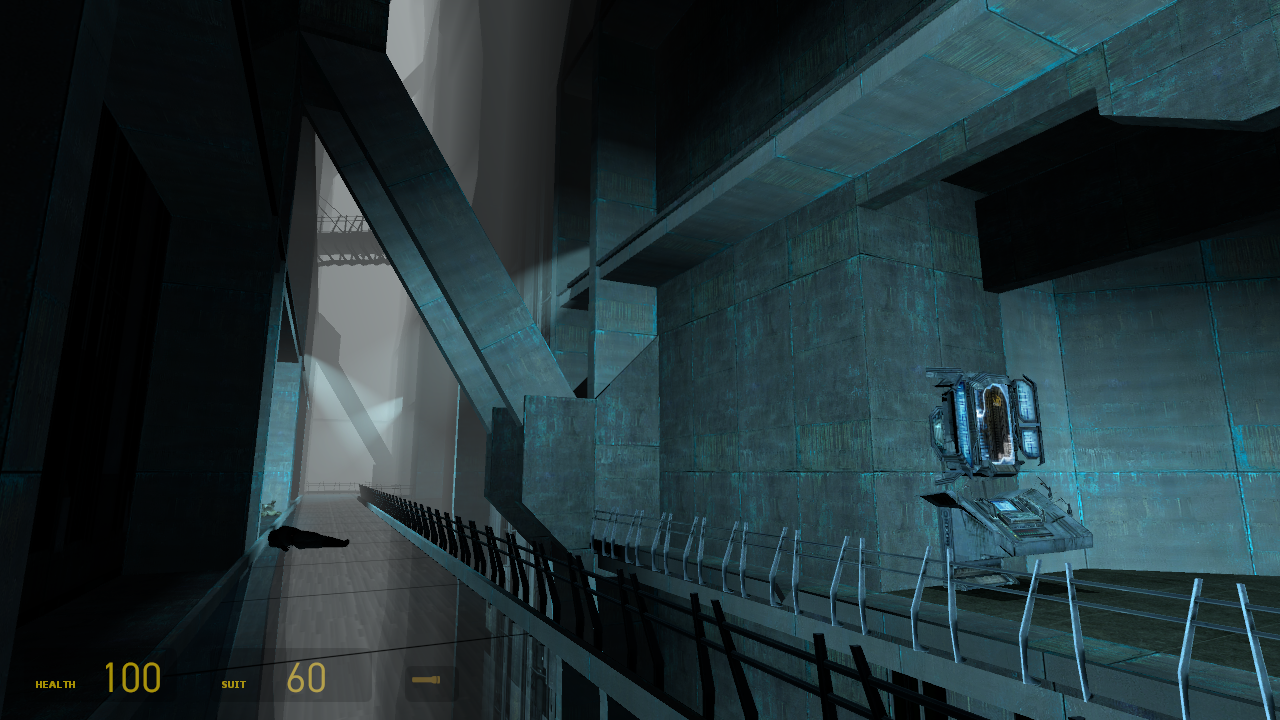

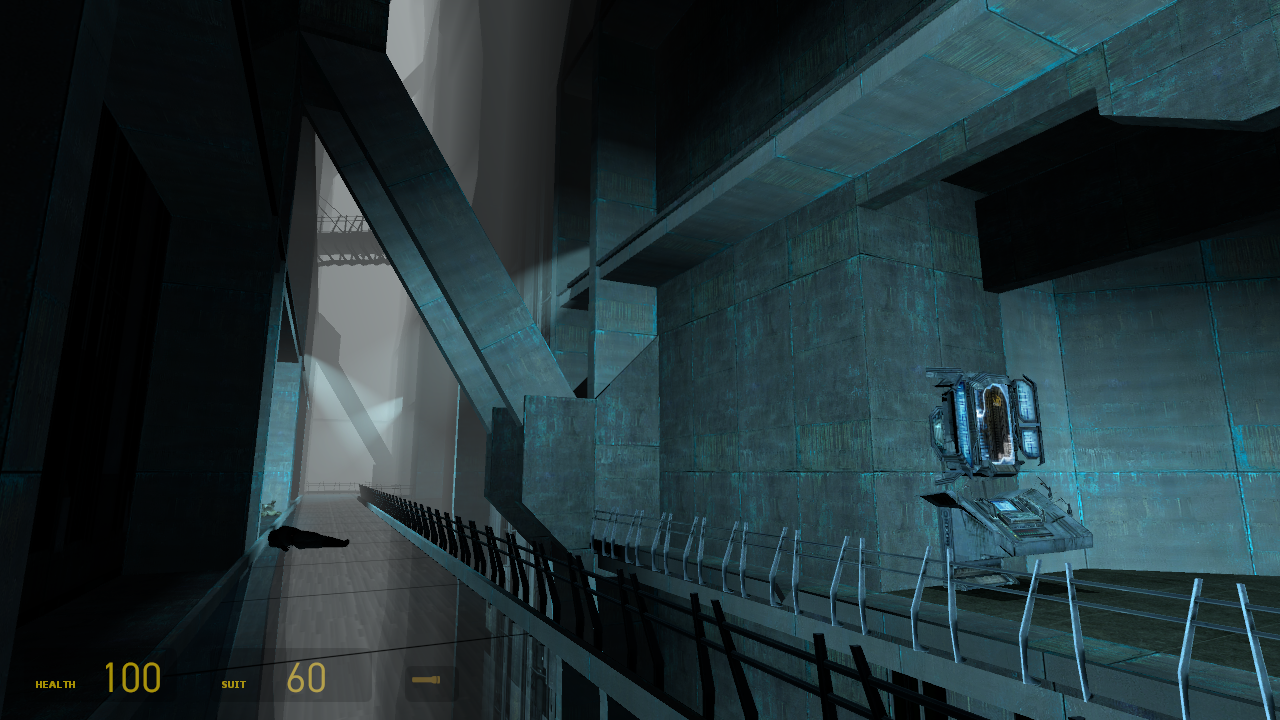

We present an efficient algorithm to compute spatially varying, direction-dependent artificial reverberation and reflection filters in large dynamic scenes for interactive sound propagation in virtual environments and video games. Our approach performs Monte Carlo integration of local visibility and depth functions to compute directionally varying reverberation effects. The algorithm also uses a dynamically generated rectangular aural proxy to efficiently model 2–4 orders of early reflections. These two techniques are combined to generate reflection and reverberation filters that vary with the direction of incidence at the listener. This combination leads to better sound source localization and immersion. The overall algorithm is efficient, easy to implement, and can handle moving sound sources, listeners, and dynamic scenes with minimal storage overhead. We have integrated our approach with the audio rendering pipeline in Valve's Source game engine and use it to generate realistic directional sound propagation effects in indoor and outdoor scenes in real time. We demonstrate, through quantitative comparisons as well as evaluations, that our approach leads to enhanced, immersive multi-modal interaction.
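The abstract names two ingredients: Monte Carlo integration of visibility and depth around the listener, and a rectangular aural proxy for low-order early reflections. The sketch below illustrates generic versions of both under stated assumptions; it is not the authors' implementation. Assumptions: the proxy is an axis-aligned box with a single frequency-independent reflection coefficient `beta`; early reflections inside it are computed with the classic image-source method for rectangular rooms; the scene is queried through a hypothetical `raycast(origin, direction)` callback; and directions are binned into six axis-aligned sectors. All function names (`box_early_reflections`, `directional_depth`, `box_raycast`) are hypothetical.

```python
import itertools
import math
import random

SPEED_OF_SOUND = 343.0  # m/s, assumed constant (air at roughly 20 degrees C)


def _axis_images(s, length, max_order):
    """1-D image coordinates along one axis of a box spanning [0, length].

    Coordinate 2*m*length + s takes |2m| wall reflections and
    2*m*length - s takes |2m - 1| reflections.
    """
    out = []
    for m in range(-max_order, max_order + 1):
        for p, coord in ((0, 2 * m * length + s), (1, 2 * m * length - s)):
            k = abs(2 * m - p)
            if k <= max_order:
                out.append((coord, k))
    return out


def box_early_reflections(src, lst, room, max_order, beta=0.8):
    """Image-source taps in a hypothetical rectangular proxy (src != lst).

    Returns sorted (delay_s, gain, direction) tuples, where direction is the
    unit vector from the listener toward the image source, i.e. the apparent
    arrival direction. Gain assumes 1/r spreading and beta per reflection.
    """
    taps = []
    axes = [_axis_images(s, L, max_order) for s, L in zip(src, room)]
    for (x, kx), (y, ky), (z, kz) in itertools.product(*axes):
        order = kx + ky + kz
        if order > max_order:
            continue
        img = (x, y, z)
        d = math.dist(img, lst)
        direction = tuple((a - b) / d for a, b in zip(img, lst))
        taps.append((d / SPEED_OF_SOUND, beta ** order / d, direction))
    return sorted(taps)


def directional_depth(raycast, origin, n_samples=4096, seed=0):
    """Monte Carlo estimate of per-sector occlusion and mean hit depth.

    `raycast(origin, d)` is a hypothetical callback returning the hit
    distance along unit direction d, or None if the ray escapes. Sectors are
    +x, -x, +y, -y, +z, -z, chosen by the dominant direction component.
    """
    rng = random.Random(seed)
    hits, count, depth_sum = [0] * 6, [0] * 6, [0.0] * 6
    for _ in range(n_samples):
        # Uniform direction on the unit sphere via a normalized Gaussian vector.
        v = [rng.gauss(0.0, 1.0) for _ in range(3)]
        norm = math.sqrt(sum(c * c for c in v))
        d = [c / norm for c in v]
        axis = max(range(3), key=lambda i: abs(d[i]))
        b = 2 * axis + (0 if d[axis] >= 0 else 1)
        count[b] += 1
        t = raycast(origin, d)
        if t is not None:
            hits[b] += 1
            depth_sum[b] += t
    occlusion = [h / c if c else 0.0 for h, c in zip(hits, count)]
    mean_depth = [s / h if h else float("inf") for s, h in zip(depth_sum, hits)]
    return occlusion, mean_depth


def box_raycast(room):
    """Toy scene: distance from an interior point to the walls of a box."""
    def cast(o, d):
        ts = []
        for oi, di, L in zip(o, d, room):
            if di > 0:
                ts.append((L - oi) / di)
            elif di < 0:
                ts.append(-oi / di)
        return min(ts)
    return cast
```

For example, `box_early_reflections((2.0, 3.0, 1.5), (7.0, 5.0, 1.2), (10.0, 8.0, 3.0), 2)` yields the direct path plus 24 reflection taps (6 first-order and 18 second-order), and `directional_depth(box_raycast((10.0, 10.0, 10.0)), (5.0, 5.0, 5.0))` reports full occlusion in every sector because the toy box is closed. In a real engine, the per-sector occlusion and depth estimates could plausibly drive per-direction reverberation parameters, while the image-source taps supply the early part of the response; how the paper actually maps these quantities to filters is not specified here.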

We would like to thank the Army Research Office, the National Science Foundation, and Intel Corporation for their support; and Valve Corporation for permission to use the Source SDK and Half-Life 2 artwork.